Honeydew Managed Pipelines

Honeydew is a repository of shared business logic such as standard metrics and entity definitions.

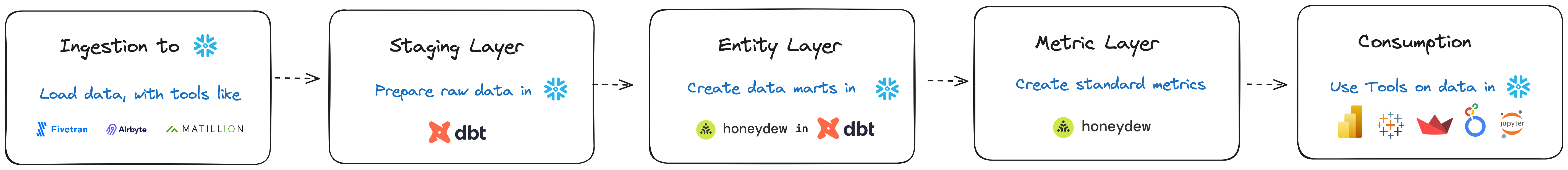

Pipeline Stages

A typical Honeydew data pipeline with Snowflake and dbt consists of the following stages:

- Ingestion: Bringing raw data into Snowflake, usually with a tool such as Fivetran.

- Staging: An incremental periodic process that transforms raw data into analytics-ready granular data. The following transformations are typically performed at this stage:

  - Data cleanup, normalization, deduplication

  - Data merging, change data capture deconstruction

  - Dimensional modeling, incremental updates

-

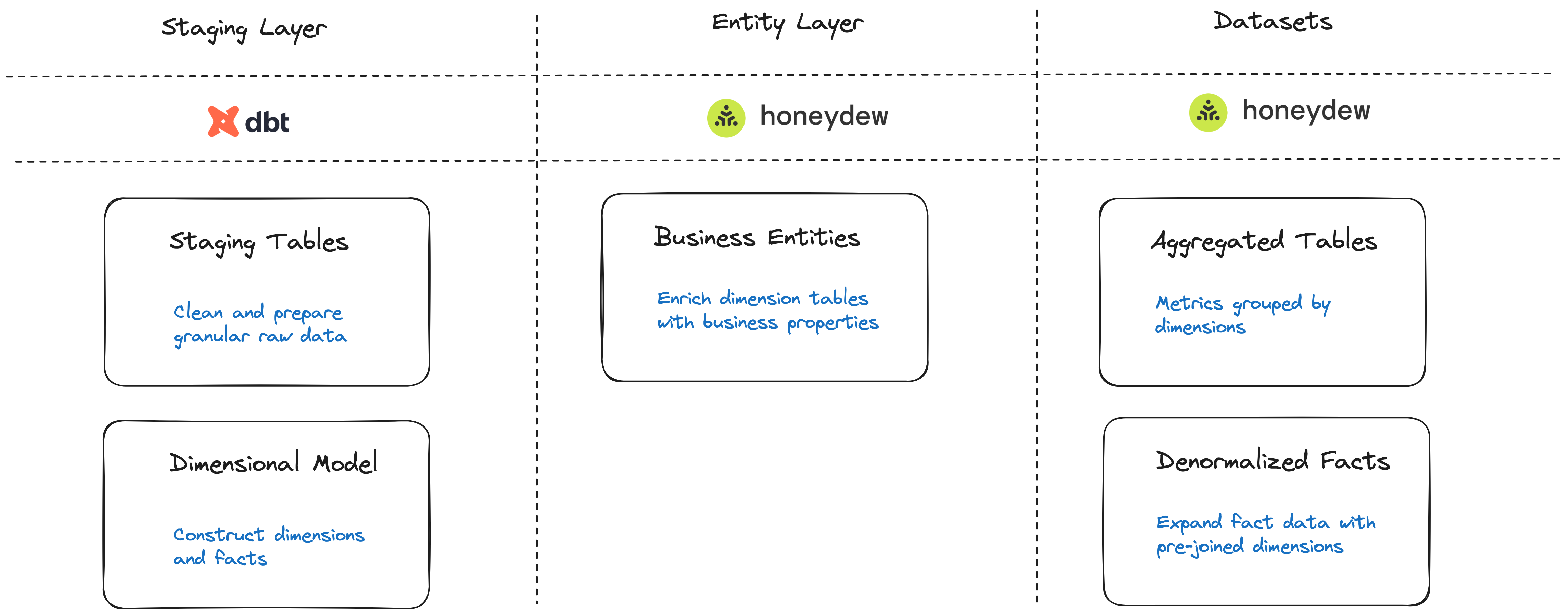

Entity Layer (Data Mart): A periodic process that transforms granular data into a domain data mart with business entities, metrics and aggregated datasets.

The following transformations are typically performed at this stage:

- Business Entities: Extend granular data with logic properties, such as

- Calculated columns (build an “Age Group” category out of “date of birth”)

- Aggregated properties (first order data, total revenue per customer, etc.)

- Metric Datasets: Build datasets such as:

- Aggregated tables (“Monthly KPIs”)

- Denormalized fact tables

- Business Entities: Extend granular data with logic properties, such as

- Metric Layer: On-the-fly transformation of user queries into JOINs and aggregations over entity tables, using standard metrics like “Revenue” or “Active Customer Count”.
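The metric layer idea can be illustrated with a minimal sketch. This is not Honeydew's API: the `METRICS` registry, the `compile_metric_query` helper, and the table and column names are all hypothetical, and SQLite stands in for Snowflake so the example runs locally. It only shows how a request for a named metric grouped by an attribute might expand into a JOIN plus an aggregation.

```python
import sqlite3

# Hypothetical metric registry: metric name -> SQL aggregation expression.
# These definitions are assumptions for illustration, not Honeydew metrics.
METRICS = {
    "revenue": "SUM(o.amount)",
    "active_customer_count": "COUNT(DISTINCT o.customer_id)",
}


def compile_metric_query(metric: str, group_by: str) -> str:
    """Expand a metric request into a JOIN plus aggregation (illustrative only)."""
    expr = METRICS[metric]
    return (
        f"SELECT {group_by} AS grp, {expr} AS value "
        "FROM fact_orders AS o "
        "JOIN dim_customers AS c ON c.customer_id = o.customer_id "
        f"GROUP BY {group_by} ORDER BY {group_by}"
    )


# In-memory SQLite database standing in for the warehouse (assumed schema).
conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE dim_customers (customer_id INTEGER PRIMARY KEY, country TEXT);
CREATE TABLE fact_orders (order_id INTEGER PRIMARY KEY, customer_id INTEGER, amount REAL);
INSERT INTO dim_customers VALUES (1, 'US'), (2, 'US'), (3, 'DE');
INSERT INTO fact_orders VALUES (10, 1, 20.0), (11, 1, 5.0), (12, 2, 10.0), (13, 3, 7.5);
""")

# The same metric definitions are reused for different user questions.
revenue_by_country = conn.execute(
    compile_metric_query("revenue", "c.country")
).fetchall()
customers_by_country = conn.execute(
    compile_metric_query("active_customer_count", "c.country")
).fetchall()
```

The point of the design is that the aggregation logic lives in one place (the registry) and the query is generated per request, so every consumer of “Revenue” gets the same definition.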

Example

- Ingestion: raw customer tables and raw order events are loaded into Snowflake.

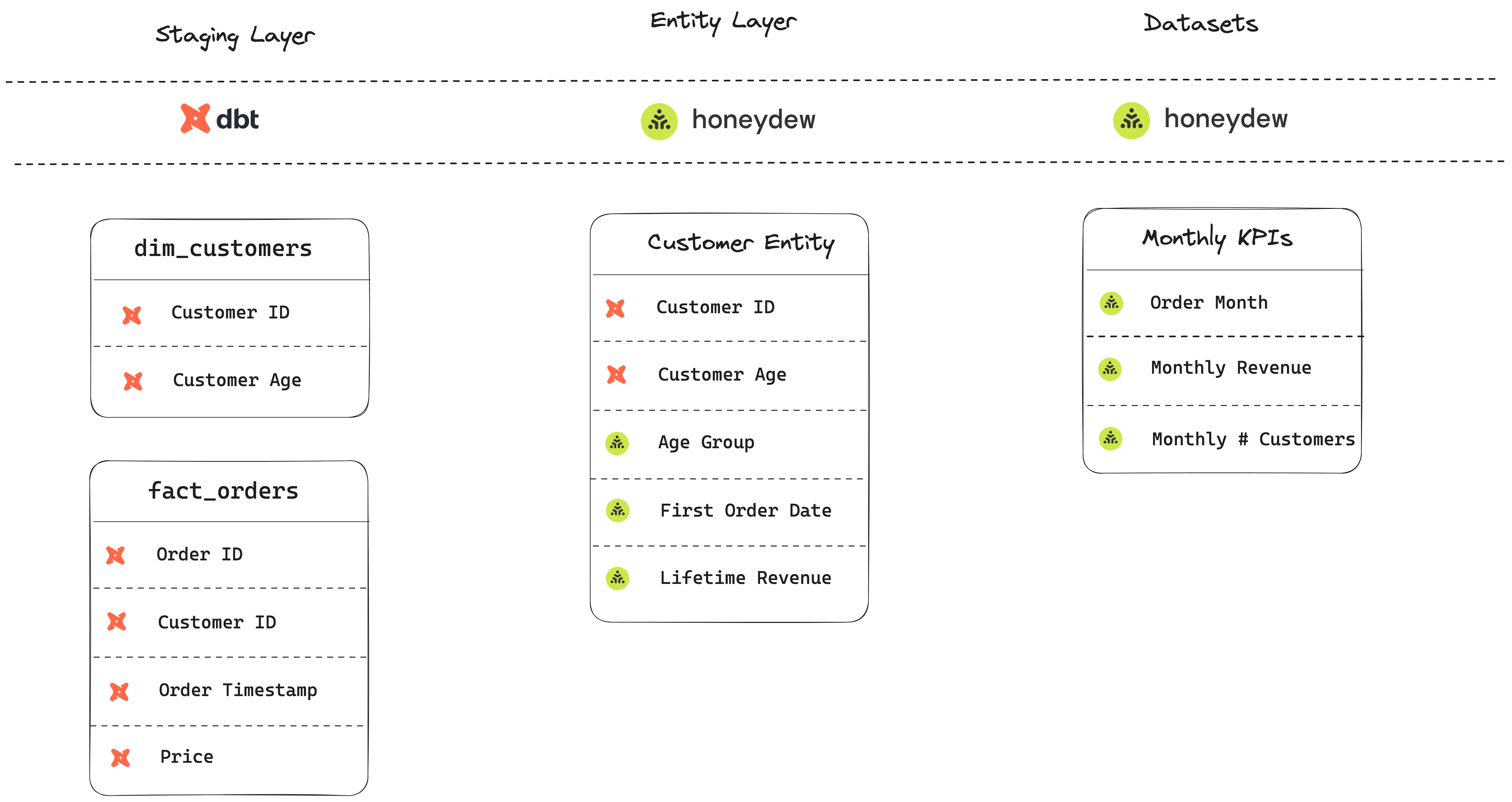

- Staging: dbt models that transform those into two tables:

  - `dim_customers`: A customer dimension table, with a row per known customer. dbt preparation includes clean-up and merging from raw data.

  - `fact_orders`: An incremental model, with a row per unique order event. dbt preparation includes handling backfilling and timestamp conversion.

- Data Mart: Honeydew-managed models:

  - Entity models, such as:

    - `customers`: A customer entity table. Includes additional properties:

      - “Age Group” - a CASE WHEN that maps ages to categories

      - “First Order Data” - an aggregation of order facts that finds the first order

      - “Lifetime Revenue” - the `revenue` standard metric by customer

  - Dataset models, such as:

    - `monthly_kpis`: An aggregated table:

      - “Order Month” - based on order timestamp

      - “Monthly Revenue” - the `revenue` standard metric by order month

      - “Monthly # Customers” - a count of customers active in a month
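The data mart models in this example can be sketched in SQL. The following is an illustration under stated assumptions, not the SQL that Honeydew generates: the column names (`date_of_birth`, `order_ts`, `amount`), the age brackets, the fixed reference date, and the definition of revenue as `SUM(amount)` are all assumed, and SQLite stands in for Snowflake so the sketch runs locally.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
-- Staging outputs: stand-ins for the dbt models (columns are assumed).
CREATE TABLE dim_customers (customer_id INTEGER PRIMARY KEY, date_of_birth TEXT);
CREATE TABLE fact_orders (
    order_id INTEGER PRIMARY KEY, customer_id INTEGER, order_ts TEXT, amount REAL
);
INSERT INTO dim_customers VALUES (1, '1990-05-01'), (2, '1960-03-20');
INSERT INTO fact_orders VALUES
    (100, 1, '2024-01-10 09:00:00', 20.0),
    (101, 1, '2024-02-03 12:30:00', 30.0),
    (102, 2, '2024-02-20 08:15:00', 50.0);

-- Entity model: customers, with calculated and aggregated properties.
CREATE TABLE customers AS
WITH ages AS (
    -- Age as of a fixed reference date, so the result is reproducible.
    SELECT customer_id,
           CAST((julianday('2024-12-31') - julianday(date_of_birth)) / 365.25
                AS INTEGER) AS age
    FROM dim_customers
),
order_stats AS (
    SELECT customer_id,
           MIN(order_ts) AS first_order_ts,   -- first order per customer
           SUM(amount)   AS lifetime_revenue  -- assumed revenue definition
    FROM fact_orders
    GROUP BY customer_id
)
SELECT a.customer_id,
       CASE WHEN a.age < 35 THEN 'Under 35'
            WHEN a.age < 55 THEN '35-54'
            ELSE '55+' END AS age_group,
       s.first_order_ts,
       s.lifetime_revenue
FROM ages AS a
LEFT JOIN order_stats AS s ON s.customer_id = a.customer_id;

-- Dataset model: monthly_kpis, aggregated by order month.
CREATE TABLE monthly_kpis AS
SELECT strftime('%Y-%m', order_ts)  AS order_month,
       SUM(amount)                  AS monthly_revenue,
       COUNT(DISTINCT customer_id)  AS monthly_customers
FROM fact_orders
GROUP BY order_month;
""")

customers = conn.execute(
    "SELECT * FROM customers ORDER BY customer_id"
).fetchall()
monthly_kpis = conn.execute(
    "SELECT * FROM monthly_kpis ORDER BY order_month"
).fetchall()
```

Note that “Lifetime Revenue” and “Monthly Revenue” use the same aggregation at different grains, which is what reusing a single `revenue` metric definition would look like in practice.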